The Psychology of Cybersecurity: How Our Minds Distort Our Perception of Cyber Risk

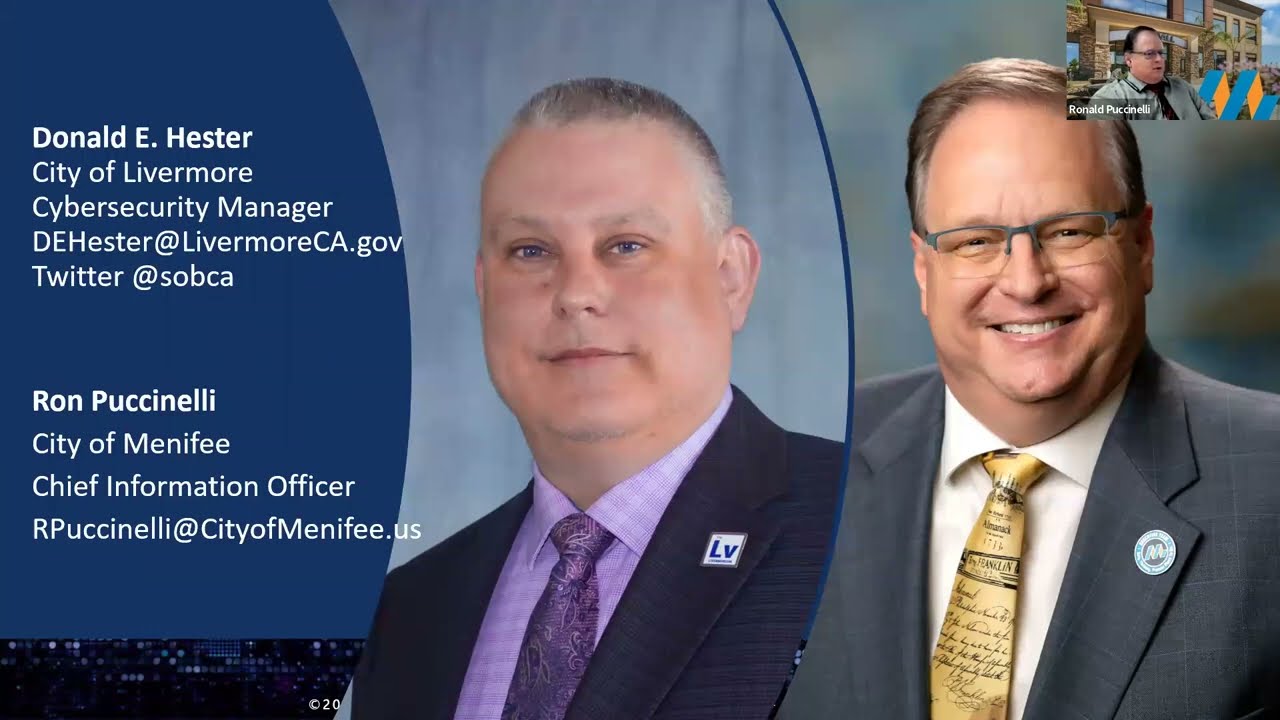

- Donald E. Hester

- Mar 8, 2023

Updated: Mar 24, 2023

When I attended the RSA conference in 2022, I tweeted about the intersection of psychology and cybersecurity. While most of the responses were related to the psychology of criminals or awareness, I believe there is a vast area to explore when it comes to psychology and cybersecurity. It is crucial to understand the psychology of threat actors, encourage cyber-safe behavior, and identify social engineering tactics to avoid becoming victims. However, what often goes unnoticed is the role of psychology in how we perceive and respond to cyber risk.

Recently, Jen Easterly, the Director of the Cybersecurity and Infrastructure Security Agency (CISA), made a statement highlighting the need to take unsafe technology seriously. She emphasized that insecure systems pose a more significant threat to organizations than Chinese spy balloons, which have been dominating news headlines. Despite this, the public tends to focus on the more sensationalized events, which is a textbook example of what Daniel Kahneman discusses in his book Thinking, Fast and Slow.

In chapter 13, Kahneman explains how we tend to misinterpret data and overestimate the likelihood of events that have a high emotional impact, happened recently, or are talked about frequently. This bias can affect how we prioritize cybersecurity risks and create distorted perceptions of reality. For instance, in the aftermath of an earthquake, people are more likely to focus on preparedness. However, as time passes, they become less concerned and less prepared.

Several factors influence our perception of risk, such as how often we hear about something, its emotional impact, and how recently an incident occurred. It is essential to manage cyber risk based on facts rather than gut intuition, which can be dangerously misleading. As an auditor, I have seen many managers ignore cybersecurity risks until they have experienced a cyber incident themselves. This approach leads to distorted priorities and a lack of preparedness.

One of the most significant cognitive biases that affect our perception of risk is neglect of probability. This bias causes us to overestimate or underestimate the likelihood and severity of a risk based on factors such as the emotional impact, vividness, or recency of the event, rather than on a rational analysis of the underlying statistics or probabilities. This can result in suboptimal decisions about how to manage the risk or allocate resources to prevent or mitigate it.

This bias is related to the availability heuristic, which is the tendency to judge the frequency or likelihood of an event based on how easily it comes to mind rather than on statistical or probabilistic reasoning. When people rely on vivid or emotional cues to judge probability, they may neglect to consider other relevant factors, such as the base rate or the statistical frequency of the event in question.

For example, people may be more afraid of flying than driving, even though the risk of dying in a car accident is much higher than the risk of dying in a plane crash. This is because plane crashes are more vivid and emotionally charged events that receive more media attention and are more salient in people's minds than car accidents, which are more common and less sensationalized.

Another bias that influences our perception of risk is the availability cascade. It occurs when repeated exposure to related information or stories makes a risk feel more common or more serious than it is, even if the actual likelihood or severity of the risk is relatively low. This can lead us to overestimate the prevalence or importance of the risk and take disproportionate actions to address it.

One major source of the availability cascade is the news. News sources often prioritize stories that will get the most views or clicks, which tend to be novel or emotionally impactful stories. This creates a false sense of prevalence and drives emotional intensity, which can result in disproportionate attention to certain risks.

For example, consider the recent news coverage of spy balloons versus the risk of insecure systems. While insecure systems pose a much greater threat to organizations, the spy balloons have received far more media attention. This is a textbook example of the availability cascade, where repeated exposure to spy balloon stories creates a false sense of prevalence and emotional intensity.

This bias can have serious consequences, as it can lead to a misallocation of resources and efforts. To combat the availability cascade, it is important to seek out diverse sources of information and critically evaluate the actual likelihood and severity of risks rather than relying solely on the information presented to us.

Additionally, associative coherence can shape our perception of risk by influencing how we organize and interpret information related to the risk and by affecting our ability to integrate new information or consider alternative perspectives. This can result in overlooking important factors or alternative risk management options.

It is important to approach risk management with a scientific mindset, much like insurance underwriters do. Actuarial tables and statistical models are used to determine the likelihood of an event occurring, providing a more objective and unbiased view of the risk. A perfect example of this is highlighted in an episode of the TV show Bones, where Dr. Temperance Brennan initially believed that her above-average intelligence would make her less likely to die before her husband, leading her to challenge the high life insurance rate. However, after reviewing the statistics, she conceded that the insurance company's calculations were correct. By relying on data-driven approaches rather than gut intuition, we can better understand and manage risks in a more effective and efficient manner.
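To make the underwriter's mindset concrete, here is a minimal sketch that ranks risks by expected annual loss (likelihood times impact) and compares that ranking with one driven by media attention. The `Risk` class, the risk names, and every number are hypothetical, chosen only for illustration; they are not real data, and a real program would draw its estimates from incident history or industry statistics.

```python
from dataclasses import dataclass


@dataclass
class Risk:
    name: str
    annual_probability: float  # hypothetical estimate between 0.0 and 1.0
    impact_usd: float          # hypothetical loss if the event occurs
    headline_count: int        # hypothetical stand-in for media attention


def expected_annual_loss(risk: Risk) -> float:
    """Likelihood times impact: a simple expected-loss estimate."""
    return risk.annual_probability * risk.impact_usd


def rank_by_expected_loss(risks: list[Risk]) -> list[Risk]:
    """Order risks the way a data-driven review would."""
    return sorted(risks, key=expected_annual_loss, reverse=True)


def rank_by_headlines(risks: list[Risk]) -> list[Risk]:
    """Order risks the way the availability heuristic would."""
    return sorted(risks, key=lambda r: r.headline_count, reverse=True)


# Illustrative numbers only; none of these figures come from real data.
risks = [
    Risk("Unpatched internet-facing server", 0.30, 500_000, 2),
    Risk("Headline-grabbing exotic threat", 0.001, 1_000_000, 50),
    Risk("Phishing-led credential theft", 0.20, 200_000, 5),
]

print("Ranked by expected annual loss:")
for risk in rank_by_expected_loss(risks):
    print(f"  {risk.name}: ${expected_annual_loss(risk):,.0f}")

print("Ranked by headlines:")
for risk in rank_by_headlines(risks):
    print(f"  {risk.name}: {risk.headline_count} stories")
```

With these made-up inputs the two orderings are reversed: the risk with the most headlines has the smallest expected loss. The point is not the specific figures but the habit of writing the estimates down and letting them, rather than salience, set the priority.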

In cybersecurity, communicating risk to management can be challenging, as many managers tend to ignore cyber risks unless they have experienced a cyber incident. The gut intuition that some managers have, that "it hasn't happened in my career, therefore it is not likely," is a dangerous attitude to have. It leads to distorted priorities and ineffective risk management. Instead, organizations should manage risks by facts, using tools such as actuarial tables or statistical modeling, which are less prone to bias.
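A quick calculation shows why "it hasn't happened in my career" is weak evidence. The sketch below assumes a fixed annual incident probability and independence between years, both simplifications, and the 5 percent figure is a hypothetical value picked for illustration rather than a measured rate.

```python
def prob_no_incident(annual_probability: float, years: int) -> float:
    """Chance of seeing zero incidents across `years` independent years."""
    return (1.0 - annual_probability) ** years


def prob_at_least_one(annual_probability: float, years: int) -> float:
    """Chance of seeing one or more incidents over the same period."""
    return 1.0 - prob_no_incident(annual_probability, years)


# Hypothetical annual probability of a serious incident (an assumption).
p = 0.05

for years in (1, 5, 10, 20):
    print(
        f"{years:>2} years: "
        f"P(no incident) = {prob_no_incident(p, years):.2f}, "
        f"P(at least one) = {prob_at_least_one(p, years):.2f}"
    )
```

Under these assumptions, a manager has roughly a 36 percent chance of going 20 years without an incident, even though an incident over that span is more likely than not. A clean personal track record is therefore quite compatible with a meaningful underlying risk.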

Psychology is critical in how we perceive and respond to cyber risks. Understanding cognitive biases, such as neglect of probability, the availability cascade, and associative coherence, can help us make informed risk management decisions. Organizations should manage cyber risks by facts rather than gut intuition and prioritize fixing insecure systems rather than focusing on the latest headline-grabbing cyber incident. Building awareness of these biases and their potential impact is essential if we are to be more reflective and critical in our thinking about cyber risks.

Being unaware of cognitive biases can actually increase the risk to a company or organization. When managers or decision-makers are unaware of the biases that can influence their perception of risk, they may fail to appropriately mitigate real risks. For example, if a manager ignores a cybersecurity risk simply because they have not experienced a cyber incident in their career, they may be underestimating the true risk and leaving their organization vulnerable. This type of bias can lead to distorted priorities and a failure to allocate resources to the most pressing risks. By being aware of cognitive biases, managers and decision-makers can work to minimize their impact and make more objective decisions based on facts and evidence rather than intuition or emotion.

In this post, I have outlined how cognitive biases can impact our perception of cyber risk and influence how we respond to it. I also explored how biases can lead to distorted priorities and a lack of appropriate risk mitigation measures. It's important to be aware of these biases and think like scientists or insurance underwriters to ensure we manage risks based on facts and statistics rather than gut intuition or emotional reactions. By doing so, we can better protect ourselves and our organizations from cyber threats.

Resources

Thinking, Fast and Slow by Daniel Kahneman

Your Deceptive Mind: A Scientific Guide to Critical Thinking Skills by Steven Novella, The Great Courses

Availability Cascades and Risk Regulation by Timur Kuran and Cass R. Sunstein, Stanford Law Review, Vol. 51, No. 4 (Apr. 1999), pp. 683-768
